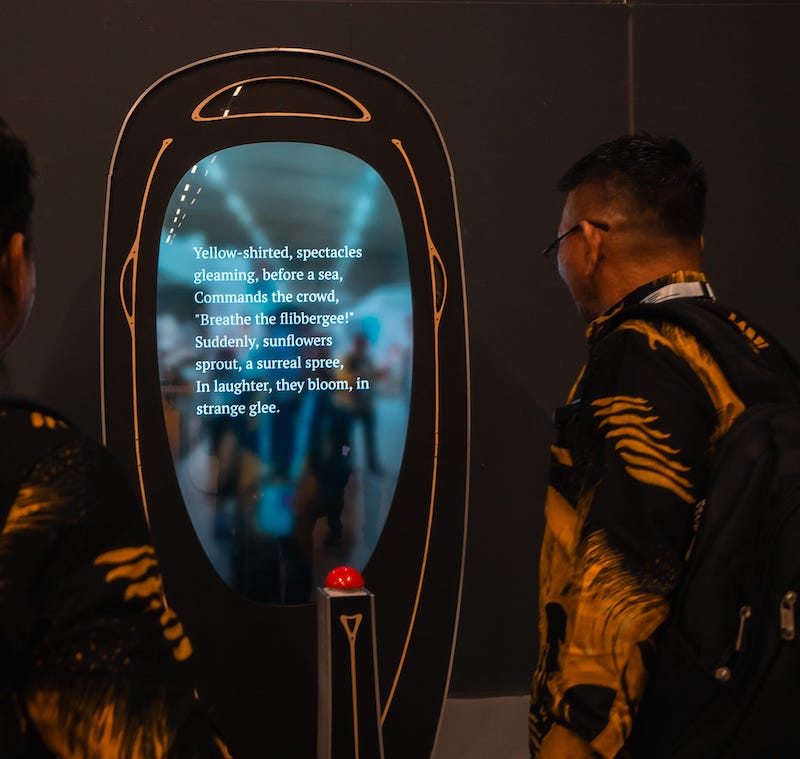

At this year's Lowlands festival and IFLA World Library Congress we presented the Poem Booth, an installation that takes an image of you and your surroundings and creates a beautiful, unique poem. The Poem Booth is a collaboration between VOUW and Little Robots. VOUW created the concept and the physical Poem Booth, and Little Robots created the software and technology used.

TL;DR

This is going to be a longer post so let me give you the summary:

- Poem Booth started as a hackathon project: a web-app prototype running on a single laptop. At Lowlands we presented the physical Poem Booth installation, which runs on Android TV hardware.

- The Android app is fully managed and runs in kiosk mode, for which I created a small library dubbed Kiosk kit. Kiosk kit takes care of locking down the device and of app updates. Poem Booth also receives real-time configuration updates.

- The API doing the image-to-text translation is hosted on Cloud Run. Hugging Face inference endpoints are used for faster inference. These GPU endpoints can also be turned off to save on costs.

- For device configuration and for testing and authoring ChatGPT prompts, a web app was created using SvelteKit. This allows us to configure each Poem Booth and make it unique.

- The results of the Poem Booth are published to poems.poembooth.com in real time. You can see all Lowlands poems and the English IFLA WLIC poems there.

How it started

In February of this year, Justus Bruns pitched the idea of the Poem Booth at an AI-themed hackathon that I was attending. Among all the other ideas pitched, it struck me. It wasn’t about being more efficient or making money; the idea of the Poem Booth is to give you a moment of joy by creating your personal poem. At the same time, there is the interesting question of whether this robot-poet can actually create interesting or touching poems. Anyway, it sounded like a fun challenge for the hackathon, so Justus, Thijs and I teamed up and started brainstorming.

The first version of the Poem Booth

The first version was functional, but (obviously) not a finished product. It ran everything locally on my laptop. I created a tiny API that would accept an image and then use an image captioning model we found on Hugging Face to create an image caption. With the output of that model, we would ask OpenAI’s davinci model to write us a haiku. Because creating an Android app talking to my laptop on the local Wi-Fi would probably be too complicated, I settled on creating a web application using SvelteKit. We had something working in a few hours and spent the rest of the time squashing bugs, testing and styling it. Everybody we showed it to was very enthusiastic and (to my surprise) we actually won the “best artistic” category prize!

An art installation running Android

For this project, using Android made a lot of sense. First, it allowed us to prototype with different device types. The same app runs on phones, tablets and TVs. We thought about using a tablet as a basis for the installation at first, but we settled on using an Android TV device so that we can project to larger screens. Second, using Android allows us to fully use the camera. Earlier prototypes used ML Kit to detect the presence of a user, for example. While we eventually didn’t use that feature, the Poem Booth still leverages ML Kit for initial configuration using QR codes.

The UI of the Poem Booth is written in Jetpack Compose. This gives us great APIs for animation, measuring text and dynamic updates to the UI based on state. During development of the Poem Booth this was really great, as it allowed us to update the UI just by changing the configuration on the fly.

To generate a poem, you hit the big red button. To get this trigger into Android, a Raspberry Pi Pico microcontroller is connected to the Android TV box over USB. The Pico is configured as a HID device, in this case a keyboard. When you press the button, the Pico translates this into a press of the Enter key, which is handled in the Android app just like any other keyboard input. This setup is very simple and allows for other kinds of input in the future as well.

Management and updates

Unlike most Android devices, the Poem Booth runs a single app, all the time. This is commonly referred to as kiosk mode. It is important to use kiosk mode for the Poem Booth, even though the user cannot really mess with the UI. Kiosk mode also prevents unexpected or unwanted dialogs from other apps, for example.

To run in kiosk mode, the Poem Booth app runs as a launcher and a device owner. The kiosk functionality has been developed as a separate library, with the working title Kiosk kit. Kiosk kit takes care of configuring the app as a device owner, granting all required permissions and over-the-air app updates. It also provides management APIs to enable or disable adb debugging and to configure Wi-Fi networks, something that has been restricted to device owner apps in Android in the past years. Adding Kiosk kit is very simple as well: adding the dependency is enough to have the app configured with the basics.

Kiosk kit can be used in other products that need a managed app like this, without needing a full-fledged MDM solution.

Image to text to poems

The Poem Booth app uses the API to “convert” an image to a poem, and it also uses this API to receive configuration updates. It checks in at regular intervals or when a push notification pings the Poem Booth. This allows for almost immediate configuration updates.
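The check-in rule described here can be sketched in a few lines. The real app is an Android app, so this Python version only illustrates the logic; the class name, the field names and the interval value are assumptions made for the example, not the actual implementation.

```python
from dataclasses import dataclass


@dataclass
class CheckInScheduler:
    """Decides when a booth should fetch its configuration (hypothetical helper)."""

    interval_seconds: float                # assumed polling interval
    last_check_in: float = float("-inf")   # time of the last check-in, none yet
    push_pending: bool = False             # set when a push notification arrives

    def notify_push(self) -> None:
        # A push message only flags that new configuration may be available.
        self.push_pending = True

    def should_check_in(self, now: float) -> bool:
        # Check in when a push arrived or when the interval has elapsed.
        elapsed = now - self.last_check_in
        return self.push_pending or elapsed >= self.interval_seconds

    def mark_checked_in(self, now: float) -> None:
        # Record the check-in and clear the pending push flag.
        self.last_check_in = now
        self.push_pending = False
```

With this shape, a push notification never carries the configuration itself. It only shortens the wait until the next regular check-in.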

The first version of this API ran on my laptop only. Today it is an authenticated API deployed to Cloud Run. We use Hugging Face inference endpoints to speed up the image-to-text generation. One of the challenges of running machine learning models in the cloud for a small project like this is the cost. Inference endpoints are awesome, but even the cheapest instance would cost us $400+ a month if we kept it running all the time. Therefore the inference can also run on Cloud Run on the CPU. This is slower but still OK, especially during testing and development. Our current setup actually checks if the Hugging Face endpoints are up and running and falls back to Cloud Run if they aren’t.
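A minimal sketch of that fallback, assuming each backend exposes a health check and a captioning call. The `Backend` type, the backend names and the stub functions are hypothetical stand-ins; the real health check and the calls to the endpoints are not shown in this post.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple


@dataclass
class Backend:
    name: str
    is_up: Callable[[], bool]         # health check, e.g. a status probe
    caption: Callable[[bytes], str]   # image bytes in, caption out


def caption_image(image: bytes, backends: Sequence[Backend]) -> Tuple[str, str]:
    """Return (backend name, caption) from the first backend that reports healthy."""
    for backend in backends:
        if backend.is_up():
            return backend.name, backend.caption(image)
    raise RuntimeError("no captioning backend available")


# Stub backends standing in for the real services (assumed names).
gpu = Backend("gpu-endpoint", is_up=lambda: False, caption=lambda img: "gpu caption")
cpu = Backend("cloud-run-cpu", is_up=lambda: True, caption=lambda img: "cpu caption")

# The GPU endpoint is "paused" here, so the CPU backend handles the request.
backend_name, caption = caption_image(b"fake-image-bytes", [gpu, cpu])
```

Listing the backends in order of preference keeps the choice in one place: when the GPU endpoint is switched off to save costs, requests go to the slower CPU path without any change on the caller's side.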

Authoring prompts and configuration

The basic mechanism is that a caption generated by the image-to-text model is the input for a prompt that generates an AI poem. To author and test these prompts, a web application was created, similar to the OpenAI playground but tailored to the Poem Booth. In this web app we can store various configurations, which are the prompts we can use for a particular Poem Booth. It also allows us to test these prompts at the target “temperature” and at temperature 0. For those not familiar, the temperature in relation to ChatGPT more or less controls how random the output is. Testing at temperature 0 gives fairly consistent results, allowing us to refine the prompt as we work on it. When the prompt is actually used by the Poem Booth, we use a different (higher) temperature, so that the Poem Booth gets more “creative”. The web app also has basic version control so that we can go back to other versions of the prompt we are working on.
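To make this concrete, here is a rough sketch of what such a stored configuration could look like. The `PromptConfig` class, the `{caption}` placeholder and the method names are assumptions for illustration only; the actual web app is written in SvelteKit and its data model is not described here.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Union


@dataclass
class PromptConfig:
    """A booth's prompt plus its earlier versions (hypothetical structure)."""

    template: str       # prompt text with a {caption} placeholder
    temperature: float  # the higher, "creative" temperature used by the booth
    history: List[str] = field(default_factory=list)  # earlier templates, oldest first

    def update(self, new_template: str) -> None:
        # Keep the previous version so it can be restored later.
        self.history.append(self.template)
        self.template = new_template

    def revert(self) -> None:
        # Go back to the most recent earlier version, if there is one.
        if self.history:
            self.template = self.history.pop()

    def render(self, caption: str) -> str:
        # Insert the image caption into the prompt.
        return self.template.replace("{caption}", caption)

    def trial_requests(self, caption: str) -> List[Dict[str, Union[str, float]]]:
        # One run at temperature 0 (repeatable) and one at the target temperature.
        prompt = self.render(caption)
        return [
            {"prompt": prompt, "temperature": 0.0},
            {"prompt": prompt, "temperature": self.temperature},
        ]
```

Each entry returned by `trial_requests` would then be sent to the language model. That call is left out because it depends on the API client in use.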

The hackathon version of the Poem Booth generated haikus. For the actual Poem Booth we wanted to see if this machine could produce actual, legit poetry. Justus got in touch with Maarten Inghels, a Flemish poet and artist who is as excited by the project as we are, and he offered to help us. It was really interesting to see how Maarten took the initial prompts to the next level by applying his knowledge as a poet. The web application allows Maarten or other poets to work on the prompt and try different things as well. Having a tool like this that Maarten can use himself is very important. Slight changes in the prompt can yield very different results, so immediate feedback is essential. As we learn what makes a good prompt for poems, it is likely that this interface can be simplified further, using templates for example.

Testing and validation

As an engineer, I like to write tests and validate results. When the output is poetry, though, this is not as simple, since there are no set rules on what makes a good poem. To still get some insight into the “quality” of the poems, I created a small tool that can compare multiple prompts against the same captions (the output of the image-to-text step). This way we can compare the prompts and get a feel for which one is better, more exciting, more fun, more touching or whatever aspect we’re looking for.
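The core of such a comparison can be sketched as a simple grid: every prompt is run against every caption, and the results are grouped per caption so they can be read side by side. This is not the actual tool; `compare_prompts`, the `{caption}` placeholder and the injected `generate` function (which stands in for the call to the language model) are assumptions.

```python
from typing import Callable, Dict, List


def compare_prompts(
    prompts: Dict[str, str],
    captions: List[str],
    generate: Callable[[str], str],
) -> List[Dict[str, str]]:
    """Run every prompt against every caption; one row per caption."""
    rows = []
    for caption in captions:
        # Start each row with the caption, then add one column per prompt name.
        row = {"caption": caption}
        for name, template in prompts.items():
            row[name] = generate(template.replace("{caption}", caption))
        rows.append(row)
    return rows


# Example with a stand-in generator that simply echoes the prompt in upper case.
rows = compare_prompts(
    prompts={"haiku": "Haiku: {caption}", "ode": "Ode: {caption}"},
    captions=["a cat", "two friends"],
    generate=lambda prompt: prompt.upper(),
)
```

Passing `generate` in as a parameter keeps the grid logic independent of any model API, so the same captions can be reused every time a prompt changes.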

Next steps

It was amazing to see the reactions to the Poem Booth at Lowlands and the IFLA World Library Congress. At the IFLA conference we showed the Poem Booth in English, but Justus also asked ChatGPT to translate the poems into the local language of the visitors, such as Korean, Chinese, Arabic and even Swahili. People really enjoyed these translated poems as well. I can only imagine that by authoring the prompt with the help of local poets in these languages, we could further improve these poems.

We want to bring the Poem Booth into public spaces, such as festivals, libraries, town squares etc. If you are interested in this, please do reach out! More info and contact details can be found here.

Note: After the initial publication of this post, VOUW and Little Robots signed an agreement for VOUW to acquire the rights to the Poem Booth software.